The Zero-Trust Solution

PrivacyScrubber acts as an Invisible Shield for your AI chats. It runs right in your browser, spotting names, emails, and other personal details and replacing them with generic tags like [NAME_1] before anything is sent. This follows the same principle behind SOC 2 AI privacy: the "brain" of the AI still gets enough context to be helpful, but it never sees your identity. When the AI answers, just click 'Reveal' and your original details are put back instantly, entirely on your own computer.
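To make the tag-and-reveal idea concrete, here is a minimal sketch in Python. It is not PrivacyScrubber's actual code: the `protect` and `reveal` functions, the `PATTERNS` table, and the two regular expressions are assumptions made up for illustration, and a real detector would need to be far more thorough than this.

```python
import re

# Hypothetical patterns for illustration only. The name rule is deliberately
# naive (two capitalized words in a row) and will miss or over-match real names.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "NAME": re.compile(r"\b[A-Z][a-z]+ [A-Z][a-z]+\b"),
}


def protect(text):
    """Replace detected details with numbered tags.

    Returns the masked text plus a tag-to-original mapping that never
    leaves this process.
    """
    mapping = {}  # tag -> original value
    tags = {}     # original value -> tag, so repeats reuse the same tag

    for label, pattern in PATTERNS.items():
        def substitute(match, label=label):
            value = match.group(0)
            if value not in tags:
                # Number tags per label: [NAME_1], [NAME_2], ...
                index = sum(1 for t in mapping if t.startswith(f"[{label}_")) + 1
                tag = f"[{label}_{index}]"
                tags[value] = tag
                mapping[tag] = value
            return tags[value]

        text = pattern.sub(substitute, text)

    return text, mapping


def reveal(text, mapping):
    """Put the original details back into a reply that contains tags."""
    for tag, value in mapping.items():
        text = text.replace(tag, value)
    return text


masked, mapping = protect("Hi, I'm Jane Doe. Email me at jane.doe@example.com.")
print(masked)
print(reveal("Thanks, [NAME_1]! I'll write to [EMAIL_1].", mapping))
```

Only the masked text would ever be sent to the AI. The mapping stays in local memory, which is why the reveal step needs no network access.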

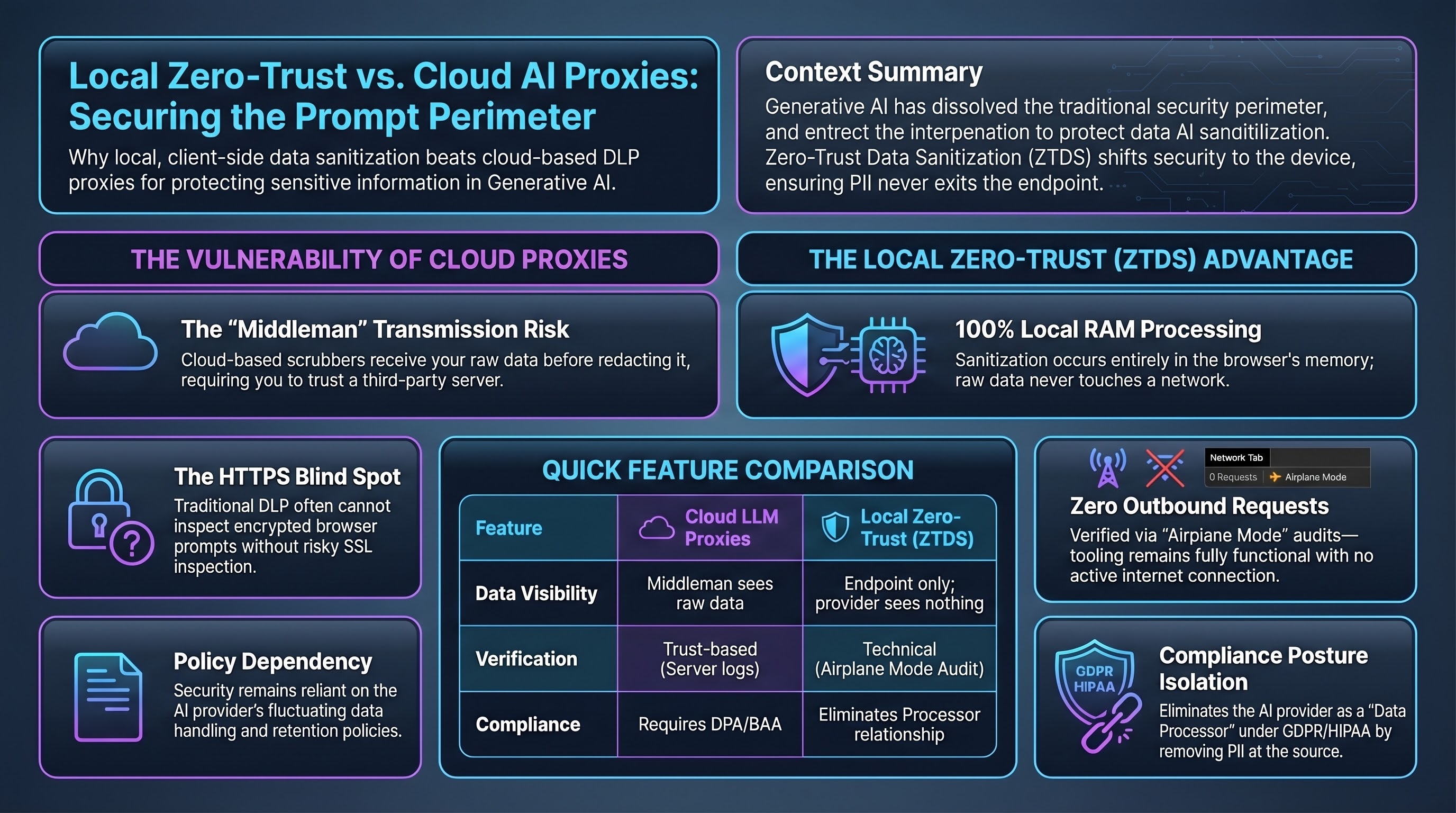

You don't have to take our word for it. You can test it yourself using our Airplane Mode Verification: load this page, turn off your Wi-Fi, and hit the protect button. The tool keeps working with no internet connection, which is the strongest proof an LLM firewall can offer for your personal safety. If it works offline, nothing is being sent to a server, so you know your data is staying with you.