Zero-Trust Client-Side vs. Cloud APIs.

Zero-Trust Comparison Sanitization

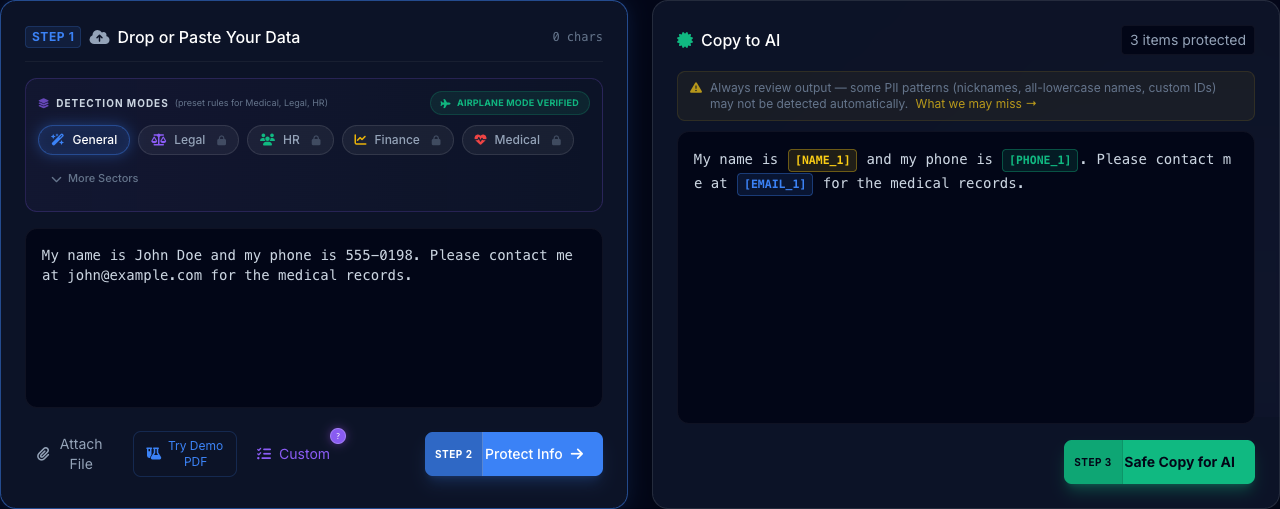

Watch the PrivacyScrubber engine transform sensitive Comparison data instantly. No API calls, no cloud latency, 100% private.

Deploy Zero-Trust AI Workflows

Equip your team with the world's first air-gapped protection layer. No cloud history, no LLM training leakage, just provably secure AI.

- 100% Client-Side Processing

- Airplane Mode Verified (Pure Offline)

- Enterprise-wide Chrome MDM Rollout

- Centralized Policy Control Center

- Advanced Pattern Detection Engine

AI Summary / Key Takeaways

"Architectural comparison between zero-trust client-side sanitization and legacy cloud DLP APIs. PrivacyScrubber eliminates the transmission risk inherent in cloud-based scrubbers by redacting data locally, ensuring absolute data sovereignty and superior network performance with zero latency."

Enterprise-Grade AI Privacy

Add custom redaction rules and priority support with PRO.

Executive Summary: COMPARISON

Choosing between server-side and local privacy processing is the most important decision you will make for your data. Cloud-based PII scrubbers still transmit your raw data to their servers before redacting it, which means you are still trusting a middleman. PrivacyScrubber is zero-trust: your data never leaves your browser's memory until it is already anonymized. Our comparison guides show exactly why browser-side processing is the only way to satisfy the compliance requirements of 2026.

Privacy Checkpoints

- Middleman Risk: Cloud scrubbers see your data before they hide it.

- Zero-Trust Proof: Use the Network Tab to verify that no raw data ever leaves your screen.

- Latency vs. Safety: Local processing is faster and harder to breach.

- Transmission Gap: Don't trust 'we delete logs' — trust 'no data sent'.

PII Detection Matrix

| Entity Type | Exposure Risk | Local Edge Control |
|---|---|---|
| Raw Data | Critical (Transmission) | Local RAM Processing |
| Session Logs | High (Retention) | Zero Server Storage |
| API Latency | Medium (Efficiency) | Browser-Level Speed |

How the PrivacyScrubber Engine Solves This

Zero API Latency

Cloud-based DLP intercepts add seconds of delay. Client-side processing takes milliseconds.

- Engine: WASM-Accelerated

- Privacy: 100% Local RAM

- Security: Zero-Server Leak

No Supply Chain Risk

Unlike cloud DLP solutions, if our servers were hypothetically compromised, your data would remain unaffected. We don't have it.

Compare Edition Features

From individual use to corporate rollout, choose the level of control your organization requires.

| Core Capabilities | Free (Web Only) | PRO ($15/mo or $110 Lifetime) | TEAMS ($99/mo) |
|---|---|---|---|
| 100% Local Processing (Airplane Mode) | Yes | Yes | Yes |
| Text Paste & Single File Docs | Yes | Yes | Yes |
| Batch Processing & Background OCR | No | Yes | Yes |
| Custom Regex & Specific Redaction Rules | No | Yes | Yes |
| Chrome Extension Native App | No | No | Yes |
| Silent Corporate Deployment (MDM) | No | No | Yes |
| Policy Control Center & Enforcement | No | No | Yes |

Comparison Compliance Library

Detailed workflows for sanitizing PII in Comparison environments.

PrivacyScrubber vs ChatGPT Temporary Chat

Compare PrivacyScrubber local processing vs ChatGPT temporary chat. Which protects your data better?

Web App vs Browser Extension for AI Privacy

Why a web-based PII protector is safer than browser extensions for protecting AI input data.

ChatGPT Privacy Settings

Are ChatGPT privacy settings, memory toggles, and temporary chats truly enough to protect your data? Why local processing is safer.

Is Claude Safe for Confidential Data? Claude vs PrivacyScrubber

Anthropic Claude stores and may review your prompts. Learn whether Claude is safe and how to protect your data locally before you paste.

Google Gemini Data Privacy

Google Gemini can use your prompts to improve its models. Learn what data Gemini collects and how to protect confidential information before using it.

Privacy Focused AI Tools

A deep dive into privacy-focused AI tools and why client-side PII scrubbing is superior to trusting third-party server promises.

Best Tool to Protect & Secure PII for LLMs

Compare the best tools to protect PII for AI. Why a free local PII protector beats cloud APIs.

PrivacyScrubber vs Nightfall AI

Compare PrivacyScrubber local processing vs Nightfall AI cloud webhooks. Which zero-trust data loss prevention tool is best for your enterprise?

PrivacyScrubber vs Microsoft Purview AI Hub

Microsoft Purview AI Hub requires complex E5 licensing and cloud syncing. Compare it with the lightweight, zero-trust PrivacyScrubber local engine.

Local Browser Redaction vs Cloud DLP Webhooks

Architectural comparison: Why processing PII locally in the browser is more secure than sending it to a Cloud DLP webhook API for redaction.

Open Source PII Scrubbers vs PrivacyScrubber

Analyzing open-source PII scrubbers such as Presidio, alongside managed services such as Amazon Comprehend, versus the turnkey local PrivacyScrubber zero-trust engine.

The Best AI Privacy Tools for 2026

As LLM adoption scales, trusting black-box APIs with sensitive PII is no longer viable. Explore our definitive comparison of the best privacy-focused AI tools and discover why finding the right AI data privacy platform requires shifting from cloud webhooks to zero-trust client-side processing.

PrivacyScrubber vs Amazon Macie for AI Prompts

Compare PrivacyScrubber local browser redaction vs Amazon Macie. Why scanning S3 buckets is too slow for real-time ChatGPT masking.

PrivacyScrubber vs Google Cloud DLP

How PrivacyScrubber local processing compares to Google Cloud DLP webhooks. Achieve Zero-Trust Data Sanitization without proxy latency.

PrivacyScrubber vs Microsoft Presidio

Compare Microsoft Presidio implementation overhead with PrivacyScrubber out-of-the-box local data protection for GenAI.

ChatGPT Enterprise vs Local PII Scrubber

Is ChatGPT Enterprise necessary for privacy? Compare OpenAI Enterprise data policies against 100% local, Zero-Trust data sanitization.

Claude for Work vs Local PII Anonymization

Compare Anthropic Claude for Work data governance with client-side Zero-Trust data masking to completely isolate your internal PII.

PrivacyScrubber vs AWS Comprehend Medical

Compare PrivacyScrubber local PHI redaction vs AWS Comprehend Medical. Why zero-server architecture wins for clinical note AI safety.

PrivacyScrubber vs. Enterprise Cloud DLP

Compare PrivacyScrubber zero-trust local redaction vs Enterprise Cloud DLP (Nightfall, Amazon Macie). Learn why browser-side processing is safer for AI.

Zero-Trust vs. Server-Side Data Masking

Understand the critical security difference between Zero-Trust client-side sanitization and traditional server-side data masking for LLMs.

ChatGPT Enterprise Data Controls vs. Client-Side Sanitization

Does ChatGPT Enterprise protect your PII? Compare OpenAI's enterprise privacy settings with 100% local, air-gapped data sanitization.

Comparison Technical Compliance Library

Deep architectural mapping of Zero-Trust Data Sanitization (ZTDS) controls to industry-specific regulatory standards.

Zero-Trust Verification Signature

The above technical controls are enforced deterministically by the PrivacyScrubber Local Engine. All redaction cycles generate zero server-side telemetry, satisfying global data residency requirements for Comparison institutions.

Hardened Audit Standards

Satisfying strict global security and privacy frameworks.

- No data persistence on untrusted infrastructure.

- Privacy by design at the engineering layer.

- Data masking as a core organisational control.

- Federal PII minimisation and transparency controls.

- Satisfies Safe Harbor de-identification requirements.

Verified by the Enterprise Board

Our 10-persona AI team ensures Comparison compliance at every layer.

"PrivacyScrubber eliminates Shadow AI risk by intercepting PII at the edge. We've mapped this hub to SOC 2 Type II and ISO 27001 masking controls."

"Under GDPR Article 32 and HIPAA Safe Harbor, local anonymization removes the AI provider from the 'Data Processor' chain, negating complex DPA liabilities."

"A single GLBA or PCI-DSS violation costs 100x more than a site-wide license. We provide verifiable ROI through data loss prevention at the prompt level."

The Comparison AI Privacy Gap

Data Persistence

Raw sensitive inputs are often stored by AI vendors for model training.

Compliance Liability

Uploading unredacted PII violates industry-specific global privacy mandates.

Shadow AI Risk

Employees using unvetted AI tools create invisible data leakage vectors.

Raw Input: Sensitive Information here

Sanitized: [PII_1] here

Secure Comparison AI Workflow

Enable high-performance AI without client data leaving your machine

Import Files

Upload documents locally into the PrivacyScrubber sandbox.

Local Masking

Identify and tokenize sensitive strings entirely within browser memory.

Analyze with AI

Submit sanitized prompts to ChatGPT or Claude for processing.

Reverse Scrub

Restore original values into the AI response locally for the final draft.
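The Local Masking and Reverse Scrub steps can be illustrated with a minimal sketch. Everything below is an assumption made for illustration: the `mask` and `restore` functions, the `TokenMap` type, and the deliberately simple email pattern are hypothetical and are not taken from the PrivacyScrubber engine. The only detail borrowed from this page is the `[PII_n]` token style.

```typescript
// Hypothetical sketch: these names are illustrative, not PrivacyScrubber's API.
type TokenMap = Record<string, string>; // token -> original value

// Deliberately simple email pattern, for illustration only.
const EMAIL = /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g;

// Local Masking: replace each distinct match with a numbered token and
// remember the original value so it can be restored later.
function mask(text: string): { masked: string; tokens: TokenMap } {
  const tokens: TokenMap = {};
  const tokenFor: Record<string, string> = {}; // original value -> token
  let count = 0;
  const masked = text.replace(EMAIL, (match: string): string => {
    if (!Object.prototype.hasOwnProperty.call(tokenFor, match)) {
      count += 1;
      const token = "[PII_" + count + "]";
      tokenFor[match] = token;
      tokens[token] = match;
    }
    return tokenFor[match];
  });
  return { masked, tokens };
}

// Reverse Scrub: put the original values back into text that still
// contains the tokens (for example, an AI response).
function restore(text: string, tokens: TokenMap): string {
  return Object.keys(tokens).reduce(
    (out: string, token: string): string => out.split(token).join(tokens[token]),
    text
  );
}

const demo = mask("Contact jane@example.com or bob@example.org; cc jane@example.com.");
// demo.masked is "Contact [PII_1] or [PII_2]; cc [PII_1]."
const finalDraft = restore("Reply to [PII_2] and [PII_1]", demo.tokens);
// finalDraft is "Reply to bob@example.org and jane@example.com"
```

In this sketch the token map lives only in local memory, so the AI provider sees tokens and never the original values. Reusing one token per distinct value keeps references consistent across the prompt and the response.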

Protocol: The 5-Step Airplane Mode Audit

Don't trust us. Trust the laws of physics. Follow this audit procedure to verify zero-server PII sanitization for Comparison workflows.

1. Load the tool: Open PrivacyScrubber.com in your browser.

2. Go Offline: Disconnect your WiFi or enable Airplane Mode. The site remains fully functional.

3. Process Data: Paste a sensitive comparison document and run the scrubber.

4. Inspect Network: Open Developer Tools (F12) and check the 'Network' tab. Verify 0 requests were made.

5. Verify Local RAM: All comparison identifiers stay in your transient browser memory and are never stored or logged.
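The Network tab check can also be approximated in code. The following is a rough sketch under stated assumptions, not a PrivacyScrubber feature: `countFetchCalls` and `scrubLocally` are hypothetical names. The helper only observes `fetch` calls made synchronously while the callback runs. It does not see `XMLHttpRequest`, `WebSocket`, or `sendBeacon` traffic, so the Developer Tools Network tab remains the more complete check.

```typescript
// Hypothetical helper, not part of PrivacyScrubber: counts fetch() calls made
// while `work` runs synchronously, and blocks them from going out.
function countFetchCalls<T>(work: () => T): { result: T; calls: number } {
  const g = globalThis as any;
  const original = g.fetch;
  let calls = 0;
  // Swap in a stub that records the attempt and refuses to send anything.
  g.fetch = (): never => {
    calls += 1;
    throw new Error("network access blocked during audit");
  };
  try {
    const result = work();
    return { result, calls };
  } finally {
    g.fetch = original; // always restore the real fetch
  }
}

// Stand-in for a purely local scrub step (an assumption for illustration).
const scrubLocally = (text: string): string =>
  text.replace(/\d{3}-\d{2}-\d{4}/g, "[PII_1]");

const audit = countFetchCalls(() => scrubLocally("SSN 123-45-6789"));
// audit.calls is 0 here because scrubLocally never touches the network.
```

A non-zero `calls` value would mean the code under test tried to reach the network during processing, which is exactly what the audit is meant to rule out.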

ZTDS Compliance Verified

All redaction patterns on this page are optimized for local-first execution. 100% GDPR, HIPAA, and CCPA compliant by design.

Frequently Asked Questions

Common questions about deploying zero-trust AI for Comparison Teams.