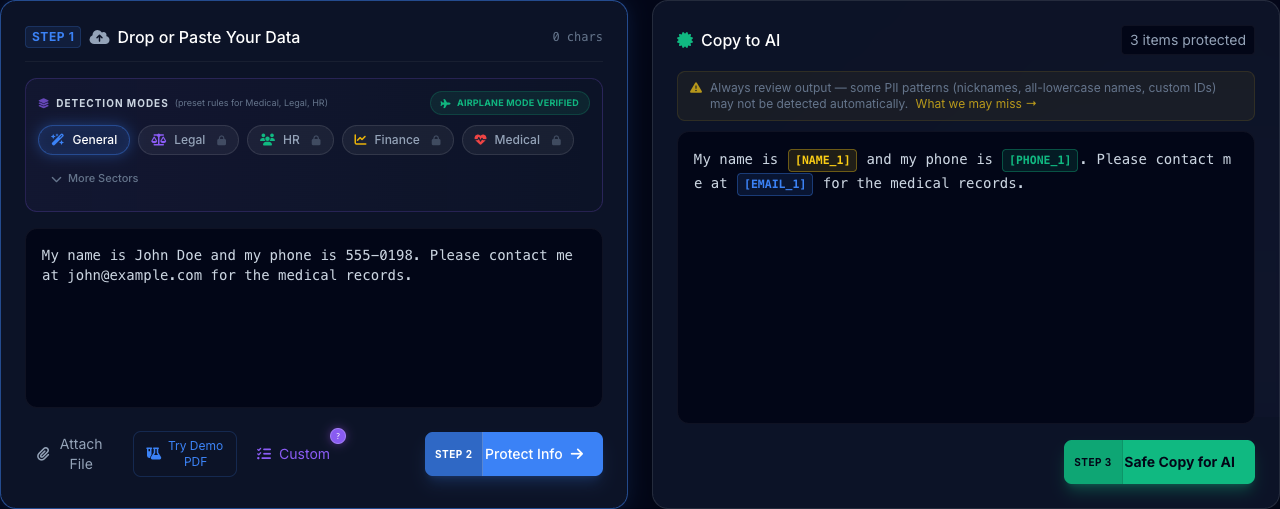

"The AI Safety Action Guides provide immediate technical procedures for organizations to neutralize PII leakage without blocking researcher velocity. These hubs focus on specific 'Playbooks' for data protection: from instruction-set masking to procedural sanitization of corporate records. PrivacyScrubber's zero-trust layer acts as the foundational control point for all AI safety actions, ensuring that researchers can innovate while the organization remains audit-proof."

Strategy Insight for AI Leadership

Scaling AI adoption requires a fundamental shift in data governance. Our enterprise AI solutions let teams use high-velocity LLMs while the underlying data stays fully under the organization's control. The solution integrates directly with your AI industry guides to provide a seamless privacy layer.

The core challenge for AI leaders is balancing utility with liability. Standard Cloud DLP filters often strip too much context or require trust in third-party servers. PrivacyScrubber's zero-trust model runs locally and preserves the semantic structure of your prompts, so AI reasoning remains accurate while personally identifiable information (PII) is deterministically masked.
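To make "deterministic masking that preserves semantic structure" concrete, here is a minimal TypeScript sketch. It assumes a simple regex-based approach with numbered placeholders; the rule set, the `maskPii` function, and the placeholder format are illustrative assumptions, not PrivacyScrubber's actual implementation.

```typescript
// Hypothetical rule set for illustration only; not PrivacyScrubber's own patterns.
interface MaskRule {
  label: string;   // placeholder prefix, e.g. "EMAIL"
  pattern: RegExp; // must carry the global flag
}

const EXAMPLE_RULES: MaskRule[] = [
  { label: "EMAIL", pattern: /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g },
  { label: "SSN", pattern: /\b\d{3}-\d{2}-\d{4}\b/g },
];

interface MaskResult {
  masked: string;                  // text that is safe to put in a prompt
  mapping: Record<string, string>; // placeholder -> original value, kept locally
}

// Deterministic: within one call, the same value always gets the same
// placeholder, so the model can still tell "same person" from "different person".
function maskPii(text: string, rules: MaskRule[] = EXAMPLE_RULES): MaskResult {
  const mapping: Record<string, string> = {};
  const seen: Record<string, string> = {}; // "LABEL:value" -> placeholder
  let masked = text;
  for (const rule of rules) {
    let counter = 0;
    masked = masked.replace(rule.pattern, (match: string): string => {
      const key = rule.label + ":" + match;
      if (!(key in seen)) {
        counter += 1;
        const token = "[" + rule.label + "_" + counter + "]";
        seen[key] = token;
        mapping[token] = match;
      }
      return seen[key];
    });
  }
  return { masked, mapping };
}
```

Because repeated values map to the same placeholder, a prompt that mentions one address twice still reads as referring to a single entity after masking, and the `mapping` object never has to leave the device.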

AI Critical Compliance Vulnerabilities

Implementing safe AI protocols without slowing down engineering velocity is a primary challenge for modern CTOs.

Manual redaction of instruction sets and system prompts is error-prone and leads to accidental intellectual property leaks.

Deploy deterministic, local-first safety actions to automate PII sanitization across all corporate AI playbooks.

Action Vector Analysis & Risk Scenarios

This section identifies the primary data exfiltration paths for Action workflows that use generative AI models.

Action Input Neutralization

"AI Safety Action Guides provide step-by-step technical procedures for neutralizing PII leakage across corporate AI workflows. Each playbook maps to specific compliance controls for immediate enterprise deployment."

Instantly mask Action identifiers in text, PDF, and DOCX files locally before transmission to any AI provider.

Redaction runs inside the browser's in-memory session, so you can verify at the hardware level (for example, with the network adapter disabled) that no data packets leave the device during the process.
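The "mask before transmission" step can be backed by a local gate that refuses to send text if it still contains recognizable identifiers. The sketch below is an assumption about how such a gate could look: `findResidualPii`, `sendIfClean`, and the detector list are hypothetical names, and the `send` callback stands in for whatever client actually talks to the AI provider.

```typescript
// Hypothetical detectors; a real deployment would load its own rule set.
const RESIDUAL_DETECTORS: { name: string; pattern: RegExp }[] = [
  { name: "email", pattern: /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/ },
  { name: "us_ssn", pattern: /\b\d{3}-\d{2}-\d{4}\b/ },
];

// Returns the names of every detector that still matches the text.
function findResidualPii(text: string): string[] {
  return RESIDUAL_DETECTORS
    .filter((d) => d.pattern.test(text))
    .map((d) => d.name);
}

// Calls `send` only when no detector matches; otherwise throws locally,
// so the payload never reaches the transport layer.
function sendIfClean(text: string, send: (payload: string) => void): void {
  const findings = findResidualPii(text);
  if (findings.length > 0) {
    throw new Error("Blocked: unmasked " + findings.join(", "));
  }
  send(text);
}
```

A gate like this is a safety net rather than a substitute for masking: it only catches what its detectors recognize.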

Audit Roadmap: Legacy Cloud-DLP vs. ZTDS

| Strategic Metric | Legacy Cloud-DLP | ZTDS (PrivacyScrubber) |
|---|---|---|
| Data Perimeter | Transmitted to Cloud API | 100% Local (Client-Side) |
| Processing Latency | 500ms - 2500ms (Network) | < 15ms (Native JS) |
| Security Posture | Trust-Based (SLA/BAA) | Math-Based (Zero-Server) |
| Compliance Status | Subject to Cloud Audit | Audit-Exempt (Local-Only) |

The Airplane Mode Standard

Disconnect your network, enable Airplane Mode, and watch PrivacyScrubber keep working exactly as before. This is not just a feature: it is a demonstration anyone can reproduce that your AI records never leave your control.

Solving AI Challenges with Enterprise Governance

Scale Zero-Trust Data Sanitization across your entire organization with centralized enforcement and native browser integration.

CISO / Compliance

In the AI sector, enforcing Zero-Trust is paramount. With the PrivacyScrubber Chrome Extension, administrators deploy data masking via MDM to all endpoints. Because masking happens locally, sensitive records are never exfiltrated to external LLM servers when employees use GenAI, which simplifies compliance and governance audits.

Operations Lead

AI organizations require agile collaboration without compromising privacy. The Enterprise Governance model features encrypted Session Sharing, allowing CISOs and managers to securely distribute custom Regex dictionaries across the department. This enforces uniform data redaction standards across all GenAI workflows, eliminating human error while maintaining high velocity in team-based AI adoption.
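A shared regex dictionary of the kind described above could be represented as plain JSON, since `RegExp` objects do not survive serialization on their own. The sketch below is an assumption about one workable shape: the entry format, the `compileDictionary` and `applyDictionary` functions, and the sample patterns are all hypothetical, and the encryption used for Session Sharing is out of scope here.

```typescript
// Hypothetical on-the-wire format: regex source and flags stored as strings.
interface DictionaryEntry {
  label: string;  // placeholder written in place of each match
  source: string; // regex source text
  flags?: string; // optional regex flags, e.g. "i"
}

interface CompiledEntry {
  label: string;
  regex: RegExp;
}

// Parses the shared JSON and compiles each entry, forcing the global flag
// so every occurrence in a document is replaced.
function compileDictionary(json: string): CompiledEntry[] {
  const entries = JSON.parse(json) as DictionaryEntry[];
  return entries.map((e: DictionaryEntry): CompiledEntry => {
    const flags = e.flags || "";
    const withGlobal = flags.indexOf("g") >= 0 ? flags : flags + "g";
    return { label: e.label, regex: new RegExp(e.source, withGlobal) };
  });
}

// Applies every compiled rule in order to the input text.
function applyDictionary(text: string, compiled: CompiledEntry[]): string {
  return compiled.reduce(
    (acc: string, entry: CompiledEntry): string =>
      acc.replace(entry.regex, (): string => "[" + entry.label + "]"),
    text
  );
}

// Example dictionary a manager might distribute (contents are made up).
const sharedDictionaryJson: string = JSON.stringify([
  { label: "EMPLOYEE_ID", source: "\\bEMP-\\d{6}\\b" },
  { label: "PROJECT", source: "\\bProject (Falcon|Osprey)\\b", flags: "i" },
]);
```

Every endpoint that compiles the same JSON applies identical rules, which is what makes the redaction standard uniform across a department.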

Edge Analyst

Daily action operations rely on continuous efficiency. The native extension automates PII scrubbing directly at the browser input field, ensuring analysts never waste time manually censoring data. This seamless integration provides zero friction and zero server latency, empowering end-users to confidently leverage ChatGPT and Claude for immediate AI insights.
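Scrubbing "directly at the browser input field" can be pictured as a small function that rewrites a field's value in place. This is a sketch under stated assumptions: `FieldLike`, `scrubEmails`, and `scrubField` are hypothetical names, the email pattern is illustrative, and the event wiring in the trailing comment is one possible approach rather than how the extension is known to work.

```typescript
// Minimal structural type so the sketch runs outside a browser;
// HTMLInputElement and HTMLTextAreaElement both satisfy it.
interface FieldLike {
  value: string;
}

// Hypothetical scrubber: masks email addresses only.
function scrubEmails(text: string): string {
  return text.replace(/[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g, "[EMAIL]");
}

// Rewrites the field in place and reports whether anything changed.
function scrubField(
  field: FieldLike,
  scrub: (text: string) => string = scrubEmails
): boolean {
  const cleaned = scrub(field.value);
  const changed = cleaned !== field.value;
  if (changed) {
    field.value = cleaned;
  }
  return changed;
}

// In a content script, the wiring might look like this (browser only):
//   document.addEventListener("change", (event) => {
//     const target = event.target;
//     if (target instanceof HTMLTextAreaElement) scrubField(target);
//   });
```

Running the scrubber when the field is committed, rather than on every keystroke, avoids masking a value that the user has only half typed.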