The Autonomous Pipeline Privacy Gap

Make/Zapier Webhook Leaks

Automation nodes often pass raw CRM records or customer support tickets directly to external LLM APIs, exposing PII to the vendor's logs.

RAG Vector Store Leakage

Loading unmasked internal documents into a vector database for RAG creates a persistent, searchable index of sensitive employee and client data.

Autonomous Secret Exposure

Coding and DevOps agents consuming CI/CD logs or internal wikis may accidentally feed architectural secrets and API keys to third-party LLMs.
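One way to reduce this risk is to redact secret-shaped strings from log lines before an agent reads them. The sketch below is a minimal illustration, not a complete scanner: `redact_secrets` is a hypothetical helper, and the two patterns (AWS access key IDs, which start with `AKIA`, and simple `name=value` assignments) are assumptions chosen for the example.

```python
import re

# Illustrative patterns only; a real scanner needs much broader coverage.
AWS_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")
ASSIGNMENT = re.compile(
    r"(?i)\b(api[_-]?key|token|password|secret)(\s*[=:]\s*)(\S+)"
)


def redact_secrets(line: str) -> str:
    """Replace secret-looking values in one log line with placeholders."""
    line = AWS_KEY_ID.sub("[REDACTED_AWS_KEY_ID]", line)
    # Keep the variable name and separator, drop only the value.
    return ASSIGNMENT.sub(lambda m: m.group(1) + m.group(2) + "[REDACTED]", line)
```

Running each CI/CD log line through a filter like this before it enters an agent's context keeps the variable names useful for debugging while withholding the values.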

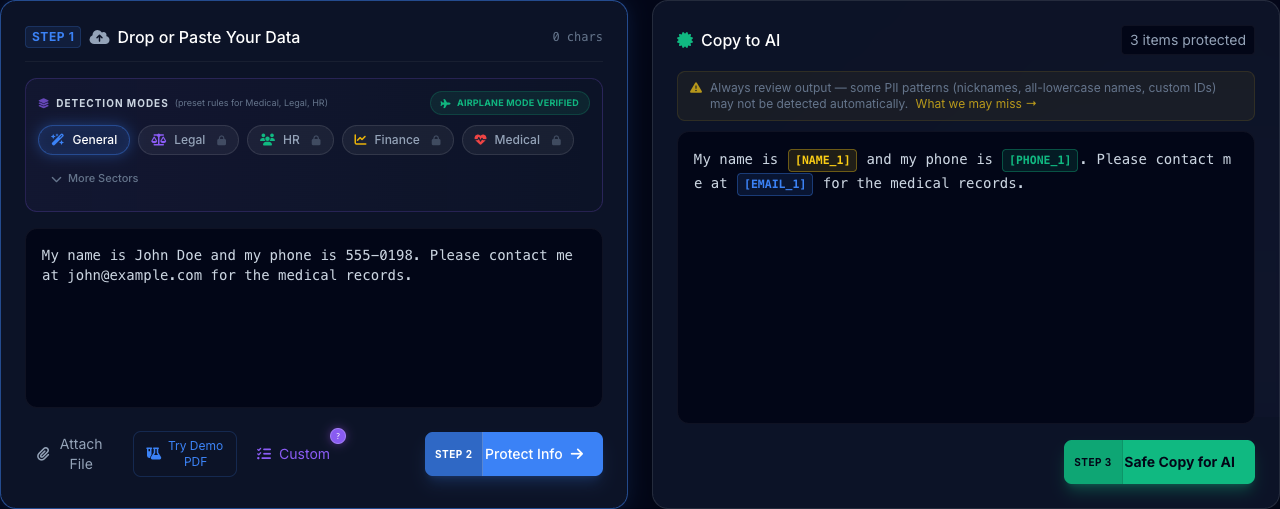

Raw input: Client Smith (A-123) is overdue.

Masked input: [NAME_1] ([ID_1]) is overdue.

Secure Agent Architecture

Modular data protection for autonomous AI systems

Intercept Payload

Before triggering any AI node, capture the raw input stream at the orchestration layer.
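As a rough sketch of interception, the orchestration layer can walk the incoming payload and pass every string field through a scrubbing function before any AI node sees it. `scrub_payload` and its `scrub` parameter are hypothetical names for this example. The payload is assumed to be JSON-like (nested dicts, lists, and scalars).

```python
from typing import Any, Callable


def scrub_payload(payload: Any, scrub: Callable[[str], str]) -> Any:
    """Return a copy of a JSON-like payload with every string value scrubbed."""
    if isinstance(payload, str):
        return scrub(payload)
    if isinstance(payload, dict):
        # Keys are left as-is; only values are scrubbed.
        return {key: scrub_payload(value, scrub) for key, value in payload.items()}
    if isinstance(payload, list):
        return [scrub_payload(item, scrub) for item in payload]
    # Numbers, booleans, and None pass through unchanged.
    return payload
```

Because the function builds a new structure instead of mutating its input, the raw payload stays available locally for the later restore step.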

Local Masking

PrivacyScrubber redacts PII locally, replacing identities with deterministic tokens.
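The idea of deterministic tokens can be illustrated with a small stand-in masker. This is not PrivacyScrubber's API. The `mask` function and the two regular expressions are assumptions made for this sketch, tuned only to reproduce the "Client Smith (A-123)" example above.

```python
import re

# Hypothetical detectors that mirror the example above; real PII detection
# needs far more than two regular expressions.
PATTERNS = {
    "NAME": re.compile(r"Client [A-Z][a-z]+"),
    "ID": re.compile(r"\b[A-Z]-\d{3}\b"),
}


def mask(text: str) -> tuple[str, dict[str, str]]:
    """Replace detected values with tokens; return masked text and token map."""
    mapping: dict[str, str] = {}              # token -> original value
    seen: dict[tuple[str, str], str] = {}     # (label, value) -> token
    counters: dict[str, int] = {}

    def make_replacer(label: str):
        def replace(match: re.Match) -> str:
            value = match.group(0)
            if (label, value) not in seen:
                counters[label] = counters.get(label, 0) + 1
                token = f"[{label}_{counters[label]}]"
                seen[(label, value)] = token
                mapping[token] = value
            # The same value always maps to the same token within one call.
            return seen[(label, value)]
        return replace

    for label, pattern in PATTERNS.items():
        text = pattern.sub(make_replacer(label), text)
    return text, mapping
```

Reusing one token per distinct value lets the model still see that two mentions refer to the same entity, without seeing who that entity is.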

Secure LLM Call

Send only the sanitized, anonymized data to the LLM for processing.
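A cheap safeguard at this step is to check the outgoing prompt against the values that were masked and refuse to send it if any of them survived. In the sketch below, `call_llm_safely` is a hypothetical wrapper, `mapping` is assumed to be a token-to-original dictionary produced by the masking step, and `call_llm` stands in for whatever client function actually contacts the model.

```python
from typing import Callable


def call_llm_safely(
    prompt: str,
    mapping: dict[str, str],
    call_llm: Callable[[str], str],
) -> str:
    """Send the prompt only if none of the masked originals appear in it."""
    leaked = [value for value in mapping.values() if value in prompt]
    if leaked:
        # Do not echo the leaked values themselves into logs or errors.
        raise ValueError(f"{len(leaked)} unmasked value(s) found in outgoing prompt")
    return call_llm(prompt)
```

The check catches only values the masker already knows about, so it guards against wiring mistakes (sending the raw text by accident) rather than against missed detections.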

Reverse Scrub

Restore the original values in the AI's response locally before delivering it to the user.
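Restoring the originals can be sketched as a single regex pass over the response. `unmask` is a hypothetical helper, and the `[LABEL_N]` token shape and the token-to-original `mapping` dictionary are assumptions carried over from the masked example above.

```python
import re

# Matches tokens shaped like [NAME_1] or [ID_12].
TOKEN = re.compile(r"\[[A-Z]+_\d+\]")


def unmask(text: str, mapping: dict[str, str]) -> str:
    """Swap each known token in the response back to its original value."""
    # Tokens missing from the mapping (e.g. invented by the model) are left as-is.
    return TOKEN.sub(lambda m: mapping.get(m.group(0), m.group(0)), text)
```

Matching whole tokens in one pass, instead of calling `str.replace` per token, avoids `[NAME_1]` being substituted inside `[NAME_10]`.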